“We’re going to get to a point where you’re a business, you come to us, you tell us what your objective is, you connect to your bank account, you don’t need any creative, you don’t need any targeting demographic, you don’t need any measurement, except to be able to read the results that we spit out. I think that’s going to be huge, I think it is a redefinition of the category of advertising.” - Mark Zuckerberg, 2025.

When I first read this quote from Mark, I didn’t entirely understand what he meant. Advertising is a fantastic business that powers much of big tech's earnings. In Q4 2025, Google produced $75B in ad revenue, and Meta produced $58B. Annualised, that is more than half a trillion dollars of advertising spend every year. Given how well that engine runs, it doesn’t seem like there’s much to fix or improve. Sure, there are new places to advertise, such as the LLM interfaces many are excited about, but there hasn’t been much discussion of how the form factor of the advertisement itself will change; at the end of the day, it’s just text, images, and videos on a screen.

An unfathomable amount of money is spent on advertising every year, and that number will only grow as the engine of capitalism rapidly increases the volume of net new goods and products it can serve to businesses and consumers alike (enabled by exponential advances in frontier technology, e.g. AI). The goal of this article is to dive into Meta’s compounding advantages in advertising, the technological investments it has made to get to this point, and what the future of advertising looks like.

The Best Advertising Engine You Know Nothing About

If there’s one thing about Instagram you have heard of or personally experienced, it is how good its advertisements are. No other app I use daily can instantly show me an ad on my phone, as I scroll through Instagram Stories, for something I searched on my laptop minutes ago or talked about in the past day. The ability of Instagram ads to “one-shot” someone is consistent and incredibly effective. At a surface level, I’m sure many of you have a broad theory of how it works: Meta collects data points across your devices and its own family of apps, somehow synthesizes that data to guess which ads you want to see, and displays those ads to you. But it’s so much more than that.

Lattice

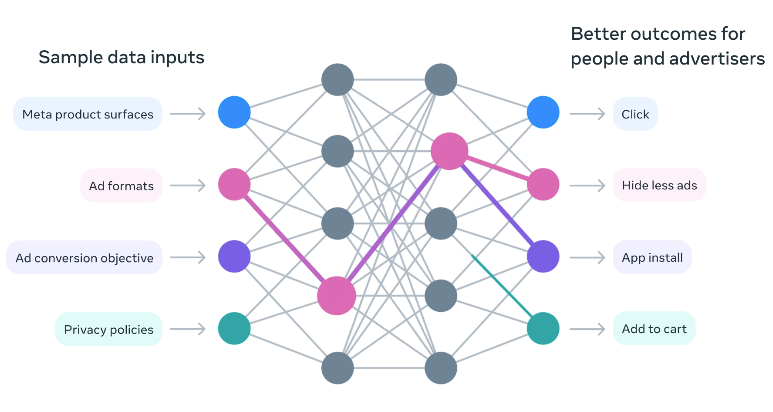

Meta Lattice, a new model architecture that learns to predict an ad’s performance across a variety of datasets and optimisation goals, was announced in May 2023. Before Lattice, Meta ran hundreds of separate deep neural network models, each independently optimised for different objectives, such as click prediction, conversion prediction, video views, and ad quality scoring. Each model was siloed, which, as you can imagine, limited the performance of Meta’s ads engine and made it that much harder to optimise.

Lattice consolidated these hundreds of models into a single, unified architecture with trillions of parameters across thousands of data domains. Meta Lattice can understand usage and engagement patterns across different data sources, such as conversion rates and ad quality, and across multiple domains, such as the Facebook Feed and Instagram Feed, while learning to predict multiple outcomes simultaneously.

Lattice also enables Meta and its advertisers to produce more relevant ad recommendations for its products and surfaces through better generalisation, even when the end user has little to no usage history or data. In addition, the model understands different types of user behaviour and how they evolve over time, enabling advertisers to capture future demand and even predict different stages of a customer's purchase life cycle.

Importantly, only a small part of the model is activated for each prediction rather than the entire model (which has obvious benefits for inference compute requirements). This is compounded by the fact that Meta no longer has to separately train, serve, and optimise hundreds of models, greatly reducing the compute required for model training as well. Having a single but far more powerful model also makes it much easier for Meta to adopt future AI advances.
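To make the two ideas above concrete (one shared model serving several objectives, with only part of it firing per prediction), here is a toy sketch. It is not Meta’s architecture: it assumes a mixture-of-experts style top-k router, which is one common way to get sparse activation, and the expert count, dimensions, and task names are hypothetical.

```python
import math
import random

random.seed(0)

DIM = 4            # hypothetical feature width
NUM_EXPERTS = 8    # hypothetical number of expert sub-networks
TOP_K = 2          # experts activated per prediction
TASKS = ["click", "conversion"]  # hypothetical objectives sharing one model


def rand_matrix(rows, cols):
    return [[random.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]


def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def sigmoid(z):
    return 1 / (1 + math.exp(-z))


# Shared parameters used by every task: a router and a pool of experts.
router = rand_matrix(NUM_EXPERTS, DIM)
experts = [rand_matrix(DIM, DIM) for _ in range(NUM_EXPERTS)]
# Lightweight per-task heads on top of the shared representation.
heads = {task: [random.uniform(-1, 1) for _ in range(DIM)] for task in TASKS}


def predict(features):
    """Return per-task probabilities and the indices of the experts that ran."""
    scores = matvec(router, features)
    # Sparse activation: only the TOP_K highest-scoring experts are evaluated.
    active = sorted(range(NUM_EXPERTS), key=lambda i: scores[i], reverse=True)[:TOP_K]
    # Softmax over the selected experts' router scores gives mixing weights.
    exps = [math.exp(scores[i]) for i in active]
    total = sum(exps)
    hidden = [0.0] * DIM
    for e, i in zip(exps, active):
        out = matvec(experts[i], features)
        hidden = [h + (e / total) * o for h, o in zip(hidden, out)]
    probs = {task: sigmoid(sum(w * h for w, h in zip(head, hidden)))
             for task, head in heads.items()}
    return probs, active


probs, active = predict([0.5, -1.0, 0.25, 2.0])
print(f"experts used: {active} of {NUM_EXPERTS}")
print({task: round(p, 3) for task, p in probs.items()})
```

Only 2 of the 8 experts do any work for a given input, which is the intuition behind the inference savings; the click and conversion heads share everything beneath them, so one training run improves both.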

Sequence Learning

Meta’s second transformation, shipped in late 2024, was arguably more consequential. For years, Meta’s ad recommendation models relied on “sparse features” that powered its deep learning recommendation model. These sparse features captured aggregate signals about user behaviour, such as which ads a user clicked on in the last X days and how many unique pages they visited in the last Y days. This data is useful, but as anyone doing product analytics knows, the real juice comes from understanding sequences of user behaviour, which sparse features do not capture. No activity ever happens in isolation.

In addition, sparse features lose granular information, such as which attributes occurred together in the same event, because attributes are aggregated across events, and they rely heavily on human intuition to decide what to aggregate. Data always tells a story, but when events are viewed in isolation, it is incredibly hard to identify which factors are driving a material change.
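A toy illustration of that information loss: two users with different histories can produce identical aggregate (“sparse”) features, while their raw event sequences stay distinguishable. User A clicked a flight ad and then bought swimwear; user B clicked swimwear twice and then bought a flight. The histories and the 7-day window are hypothetical.

```python
from collections import Counter

# Each event: (day, action, ad_category). Hypothetical histories.
user_a = [(1, "click", "flights"), (2, "click", "swimwear"), (3, "purchase", "swimwear")]
user_b = [(1, "click", "swimwear"), (2, "click", "swimwear"), (3, "purchase", "flights")]


def sparse_features(events, today=3, window_days=7):
    """Aggregate counts over a trailing window: order and co-occurrence are discarded."""
    recent = [e for e in events if today - e[0] < window_days]
    return {
        "actions": Counter(action for _, action, _ in recent),
        "categories": Counter(category for _, _, category in recent),
    }


def event_sequence(events):
    """Time-ordered (action, category) pairs keep order and co-occurring attributes."""
    return [(action, category) for _, action, category in sorted(events)]


print(sparse_features(user_a) == sparse_features(user_b))  # True: aggregates collide
print(event_sequence(user_a) == event_sequence(user_b))    # False: sequences differ
```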

Moving beyond sparse features, Meta shifted to a sequence-learning model powered by event-based features. Event-based features (EBFs) standardise heterogeneous inputs across three dimensions:

- Event streams: sequences of recent ads a user has engaged with, pages they have liked, or any other activity across Meta platforms. This is a lot more useful than high-level aggregate activity information, as it allows for a.) deep personalisation based on each individual’s captured activity, and b.) near-limitless extension with new event types.

- Sequence length: how many recent events are included in the stream. My assumption here is that, past a certain point, the extra events are not worth the extra data/compute required to process them and have greatly diminishing returns on how good the ad recommendation engine is.

- Event information: semantic and contextual information about each event, such as the ad category, timestamp, and ad length.
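The three EBF dimensions above map naturally onto a small data structure. This is a minimal sketch assuming one stream per event type with a fixed length cap; the class and field names are hypothetical, not Meta’s schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Event:
    """'Event information': semantic and contextual attributes of one event."""
    event_type: str        # e.g. "ad_click", "page_like" (hypothetical names)
    ad_category: str
    timestamp: int         # seconds since epoch
    ad_length_s: float = 0.0


@dataclass
class EventStream:
    """One 'event stream', capped at a fixed 'sequence length'."""
    name: str
    max_len: int
    events: List[Event] = field(default_factory=list)

    def append(self, event: Event) -> None:
        self.events.append(event)
        self.events.sort(key=lambda e: e.timestamp)
        # Keep only the most recent max_len events; older ones are dropped.
        if len(self.events) > self.max_len:
            self.events = self.events[-self.max_len:]


stream = EventStream(name="ad_engagements", max_len=3)
for t, cat in [(100, "shoes"), (200, "flights"), (300, "swimwear"), (400, "sunscreen")]:
    stream.append(Event("ad_click", cat, t))

print([e.ad_category for e in stream.events])  # ['flights', 'swimwear', 'sunscreen']
```

Adding a new event type is just another `EventStream`, which is the extensibility point in the first bullet, and `max_len` is where the diminishing-returns trade-off in the second bullet would be tuned.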

With these EBFs, instead of feeding the model a summary, the system now feeds it the raw sequence of a user’s interactions (every ad they engaged with, every page they visited, every conversion event) along with all of the semantic and contextual information.

The event model translates an EBF sequence into an event embedding sequence by combining a timestamp encoding with embeddings synthesized from each event’s attributes. Large Language Models use embeddings to represent words as dense, multi-dimensional vectors of real numbers (e.g. [0.2, -0.4, 0.5]) in a continuous vector space, where words with similar contexts or meanings are placed close together. The same thing is happening here for the events within a sequence.
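A minimal sketch of that step, assuming a sinusoidal timestamp encoding (borrowed from Transformer positional encodings) and one lookup table per attribute, all summed together. The article doesn’t specify the actual encoding, so the tables, the 8-dimensional width, and the hours-ago time unit are hypothetical.

```python
import math
import random

DIM = 8  # hypothetical embedding width
random.seed(42)

# Hypothetical (normally learned) lookup tables, one per event attribute.
vocab = {
    "event_type": ["ad_click", "page_visit", "conversion"],
    "ad_category": ["flights", "swimwear", "sunscreen"],
}
tables = {
    attr: {value: [random.gauss(0, 0.1) for _ in range(DIM)] for value in values}
    for attr, values in vocab.items()
}


def timestamp_encoding(hours_ago: float) -> list:
    """Sinusoidal encoding of event recency at several frequencies."""
    enc = []
    for i in range(DIM // 2):
        freq = 1.0 / (10000 ** (2 * i / DIM))
        enc += [math.sin(hours_ago * freq), math.cos(hours_ago * freq)]
    return enc


def embed_event(event: dict) -> list:
    """Combine the timestamp encoding with attribute embeddings by summation."""
    vec = timestamp_encoding(event["hours_ago"])
    for attr in vocab:
        vec = [v + a for v, a in zip(vec, tables[attr][event[attr]])]
    return vec


sequence = [
    {"event_type": "page_visit", "ad_category": "flights", "hours_ago": 48.0},
    {"event_type": "ad_click", "ad_category": "swimwear", "hours_ago": 2.0},
]
embedded = [embed_event(e) for e in sequence]  # one dense vector per event
print(len(embedded), len(embedded[0]))  # 2 8
```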

These event embeddings are then fed into a sequence model, the centerpiece of Meta’s ad recommendation system. This event sequence model is a summarisation model for person-level events. It improves personalisation and accuracy by recommending ads that match a user’s current interests and intent rather than past static preferences, and it predicts what a user might do next based on recent patterns.
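One simple way to “summarise” an embedded event sequence into a single intent vector is attention pooling: score each event against a query, softmax the scores, and take the weighted average. This is a stand-in, not Meta’s sequence model (which is far larger and learned end to end); the query vector and the toy 2-D embeddings are hypothetical.

```python
import math


def attention_pool(events, query):
    """Softmax-weighted average of event embeddings, weighted by similarity to query."""
    scores = [sum(q * x for q, x in zip(query, e)) for e in events]
    m = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    weights = [w / total for w in weights]
    dim = len(events[0])
    summary = [sum(w * e[d] for w, e in zip(weights, events)) for d in range(dim)]
    return summary, weights


# Toy 2-D embeddings: axis 0 ~ "travel", axis 1 ~ "beach gear" (hypothetical).
events = [[1.0, 0.0],   # booked a flight
          [0.2, 1.0],   # clicked swimwear
          [0.1, 1.2]]   # viewed sunscreen
query = [0.0, 2.0]      # a query that attends to beach-related intent

summary, weights = attention_pool(events, query)
print([round(w, 3) for w in weights], [round(s, 3) for s in summary])
```

The most recent, most relevant events dominate the summary, which is the “current interests rather than static preferences” behaviour described above.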

Andromeda

Sounds like a sci-fi spaceship? That’s what it is to Meta. Meta Andromeda is a personalised ads retrieval engine that leverages NVIDIA superchips with one singular focus: driving advertiser performance by delivering the most personalised ads to viewers.

The first step of the ad recommendation pipeline is retrieval. At any given time, the system needs to narrow tens of millions of eligible ad candidates down to a few thousand relevant ones, at which point more sophisticated models step in to determine the final set of ads shown to users. It is hard to overstate the sheer volume of ads Andromeda has to process: three orders of magnitude more than subsequent selection stages. In addition, ads are selected on demand. It would be incredibly expensive for Meta to maintain a list of ads for each of its 3 billion+ daily active users and refresh it constantly in anticipation of a user opening one of its platforms.
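The funnel above can be sketched as two stages: a cheap scoring pass over every candidate that keeps the top few, then an expensive model run only on the survivors. The candidate counts, the dot-product retrieval score, and the stand-in ranking model are hypothetical; the point is the 1,000x ratio of work between the stages.

```python
import heapq
import random

random.seed(7)
DIM = 16
NUM_ADS = 20_000   # stand-in for "tens of millions" of eligible ads
RETRIEVE_K = 20    # stand-in for "a few thousand" (1,000x fewer)
SHOW_N = 3         # ads finally shown

ads = [[random.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_ADS)]
user = [random.gauss(0, 1) for _ in range(DIM)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def ranking_model(ad_id):
    """Stand-in for a heavy ranking model (hypothetical scoring rule)."""
    vec = ads[ad_id]
    return dot(user, vec) + 0.1 * sum(abs(x) for x in vec)


# Stage 1, retrieval: one cheap dot product per ad, keep the top RETRIEVE_K.
candidates = heapq.nlargest(RETRIEVE_K, range(NUM_ADS), key=lambda i: dot(user, ads[i]))

# Stage 2, ranking: the expensive model runs only on the retrieved candidates.
ranked = {ad_id: ranking_model(ad_id) for ad_id in candidates}
shown = sorted(ranked, key=ranked.get, reverse=True)[:SHOW_N]
print(f"retrieval scored {NUM_ADS} ads, ranking scored {len(ranked)}, showing {shown}")
```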

Andromeda is Meta’s solution to the latency and compute demands of the retrieval stage of ad selection. Through a combination of deep neural networks custom-designed for NVIDIA superchips and hierarchical indexing to support exponential growth in ad creatives, Andromeda efficiently scales retrieval models with sublinear inference cost. Its model capacity is 10,000x that of Meta’s old retrieval model, and its design is optimised for AI hardware, minimising memory bandwidth bottlenecks (memory bandwidth has now become incredibly expensive).
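Hierarchical indexing is one way to get sublinear inference cost: instead of scoring every ad, score a small set of cluster centroids first, then only the ads inside the best clusters. The article doesn’t describe Andromeda’s actual index, so this two-level sketch is an assumption throughout; the clustering (random centroids with nearest-centroid assignment, no k-means refinement), the sizes, and the number of probed clusters are hypothetical.

```python
import heapq
import random

random.seed(1)
DIM, NUM_ADS, NUM_CLUSTERS = 8, 5_000, 50
PROBE, TOP_K = 5, 10  # clusters searched per request, ads returned


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


ads = [[random.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_ADS)]

# Build (offline): sample centroids from the ads and assign each ad to its nearest one.
centroids = random.sample(ads, NUM_CLUSTERS)
clusters = [[] for _ in range(NUM_CLUSTERS)]
for ad_id, vec in enumerate(ads):
    nearest = min(range(NUM_CLUSTERS), key=lambda c: sq_dist(vec, centroids[c]))
    clusters[nearest].append(ad_id)


def search(user, probe=PROBE, k=TOP_K):
    """Score all centroids, then only the ads inside the `probe` best clusters."""
    best_clusters = heapq.nlargest(probe, range(NUM_CLUSTERS),
                                   key=lambda c: dot(user, centroids[c]))
    pool = [ad_id for c in best_clusters for ad_id in clusters[c]]
    top = heapq.nlargest(k, pool, key=lambda i: dot(user, ads[i]))
    return top, NUM_CLUSTERS + len(pool)  # results, number of scores computed


user = [random.gauss(0, 1) for _ in range(DIM)]
results, cost = search(user)
print(f"computed {cost} scores instead of {NUM_ADS}; top ads: {results}")
```

The price is approximate results: an ad in a cluster that wasn’t probed is never scored, and raising `PROBE` trades compute back for recall. With roughly √N clusters, per-request work grows closer to √N than N.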

Predictive targeting is incredibly computationally expensive, but it dramatically improves advertiser outcomes. Andromeda improves personalisation at the retrieval stage while significantly reducing compute requirements. In a December 2024 article, Meta stated that “integrating Andromeda with MTIA and future generations of commercially-available GPUs will continue to push the boundaries of scaling retrieval – further improving advertiser performance and achieving what we estimate will be another 1,000x increase in model complexity.” Another 1,000x increase in model complexity makes it clear why such fundamental efficiency improvements are required if Meta does not want its capex and compute requirements to spiral out of control.

Generative Ads Recommendation Model (GEM)

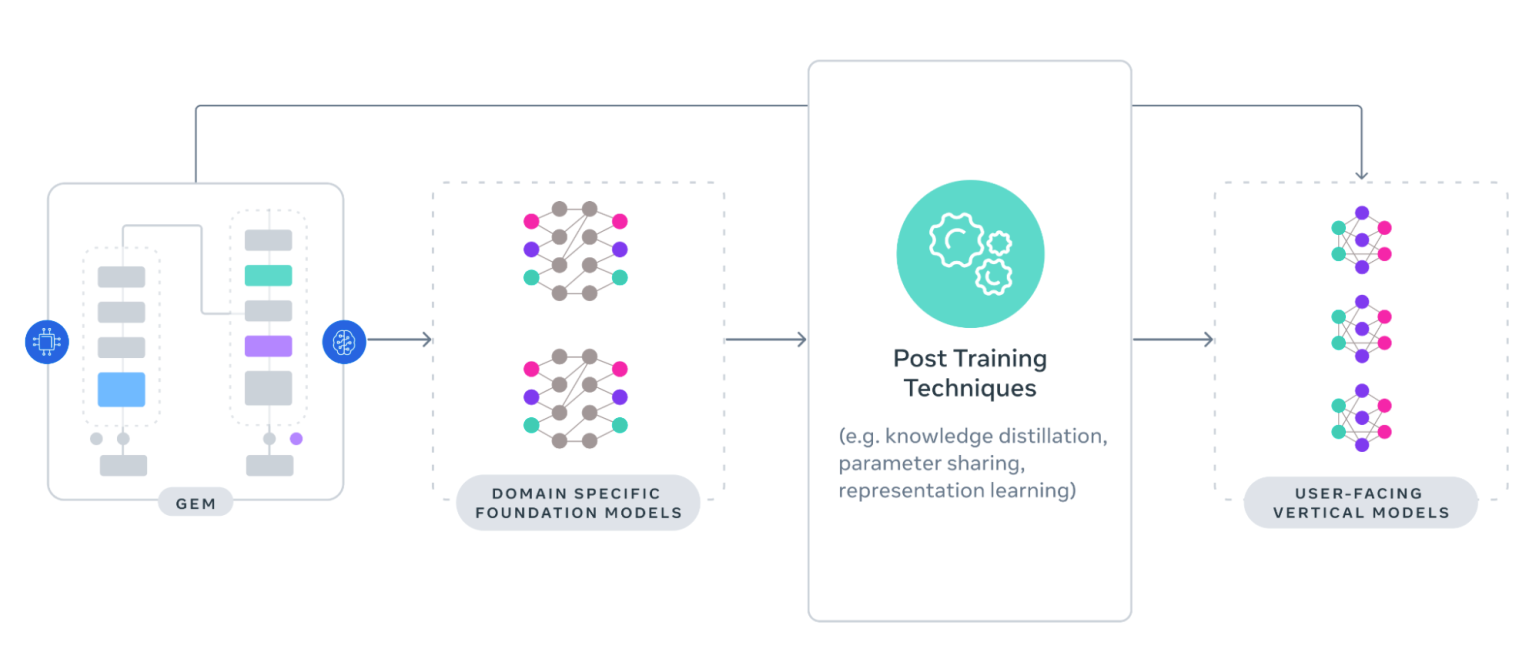

Lattice, Sequence Learning, and Andromeda have been the organs of Meta's ad recommendation system; the last component is the brain, which Meta calls its Generative Ads Recommendation Model (GEM). Announced in November 2025 and running since Q2 2025, it is the largest foundation model built for recommendation systems, trained at LLM scale. Instead of generating text, GEM generates predictions: specifically, which ad, shown to which person, at which moment, will produce the desired outcome. It is trained on ad content and user engagement data, which is split into two groups of features: a.) sequence features, such as activity history, and b.) non-sequence features, such as user-level attributes (e.g. age, gender, current location).
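As a rough illustration of how the two feature groups combine into one prediction, the sketch below scores several ads for one person at one moment and picks the best. GEM is a huge learned model; here the mean pooling, the hand-set ad vectors, and the attribute names are hypothetical placeholders.

```python
import math
from statistics import fmean


def pool(sequence):
    """Sequence features -> one vector (a plain mean; real models learn this)."""
    return [fmean(values) for values in zip(*sequence)]


def predict(ad_vector, sequence, user_attrs):
    """Toy P(desired outcome) for one ad, shown to one person, right now."""
    features = pool(sequence) + user_attrs  # sequence + non-sequence features
    z = sum(a * f for a, f in zip(ad_vector, features))
    return 1 / (1 + math.exp(-z))


# Hypothetical 2-D activity embeddings (axis 0 ~ travel, axis 1 ~ beach gear).
recent_activity = [[1.0, 0.0], [0.2, 1.0], [0.1, 1.2]]
# Hypothetical normalised user attributes: [age_bucket, lives_in_cold_climate].
user_attrs = [0.3, 1.0]

# Hypothetical ad vectors, weighting [travel, beach, age, cold_climate].
ads = {
    "surf_lessons": [0.5, 2.0, 0.0, 0.5],
    "winter_sweater": [0.0, -1.0, 0.2, 1.5],
    "office_chair": [-0.5, -0.5, 0.1, 0.0],
}
scores = {name: predict(vec, recent_activity, user_attrs) for name, vec in ads.items()}
best_ad = max(scores, key=scores.get)
print(best_ad, round(scores[best_ad], 3))
```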

The challenges of building a foundation model for ad recommendation systems are non-trivial. It must ingest billions of user interactions across multiple platforms every single day, yet high-signal events such as conversions are sparse (a user can do many things after clicking an ad). It must handle enormous heterogeneity across user behaviour and delivery channels, all while factoring in advertiser goals, creative formats, and more (advertisers often don’t know what they are optimising for until they gather more data themselves, and even then, it’s a never-ending game of optimisation on the advertiser side). Lastly, like any other foundation model, Meta must grapple with the limits of physics and software to keep training and inference costs from ballooning out of control.

GEM is a foundation model that enhances other ad recommendation models' ability to serve relevant ads and propagates its learnings across Meta's entire ads model fleet. Your brain teaches you how to breathe most efficiently during cardio, how best to engage a muscle when lifting weights, and the best form for a specific athletic move. GEM is that brain for ads, and it turns Meta's entire recommendation system into a peak-performance athlete that relentlessly improves.
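The article doesn’t say exactly how GEM propagates its learnings. One standard technique for transferring a large model’s knowledge into smaller production models is knowledge distillation: the small “student” is trained to match the large “teacher’s” predicted probabilities rather than only raw 0/1 labels. A minimal sketch under that assumption, with a hypothetical teacher function and a one-weight logistic student:

```python
import math


def sigmoid(z):
    return 1 / (1 + math.exp(-z))


def teacher(x):
    """Stand-in for a large foundation model's soft prediction (hypothetical)."""
    return sigmoid(3.0 * x - 1.0)


def distill(inputs, epochs=2000, lr=0.5):
    """Fit a tiny logistic 'student' to the teacher's probabilities by gradient descent."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        gw = gb = 0.0
        for x in inputs:
            # Gradient of cross-entropy against the teacher's soft label.
            err = sigmoid(w * x + b) - teacher(x)
            gw += err * x
            gb += err
        w -= lr * gw / len(inputs)
        b -= lr * gb / len(inputs)
    return w, b


inputs = [i / 10 for i in range(-20, 21)]
w, b = distill(inputs)
print(round(w, 2), round(b, 2))  # converges towards the teacher's 3.0 and -1.0
```

Here the student can match the teacher exactly because it has the same functional form; a real production model is smaller than its teacher and would also train on observed engagement labels.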

The future of ad recommendation systems will be defined by a deeper understanding of people’s preferences and intent, far deeper than the human mind can comprehend today. GEM will learn from everything, including user interactions across all platforms, whether that’s an ad view or a pause on a feed item, and will cover all content form factors, such as text, images, audio, and video. There is an unbelievable amount of nuance that simple algorithmic systems cannot synthesise, but GEM can.

GEM learns from cross-surface user interactions while ensuring its ad predictions are tailored to each surface’s unique characteristics. One of Meta's advantages is its diverse product portfolio: Facebook, Instagram, Messenger, Threads, and WhatsApp each let users interact with one another in different form factors, communicate in different ways, and consume different types of content. However, that also makes ad recommendation an exponentially harder problem. Does the same user have different ad preferences on different platforms? Should the recommendation system show them the same ad on two different surfaces? Contrast this with, say, a simple blog that has only one ad type (e.g. a text insert): there are far fewer dimensions to optimise.
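One common way to share learning across surfaces while still tailoring predictions to each one is a shared user representation with a small surface-specific head on top. The sketch assumes that design and is not a description of GEM’s internals; the surface heads, user vector, and ad vector are all hypothetical values.

```python
import math

# Shared representation learned from cross-surface interactions (hypothetical values).
shared_user_vec = [0.8, -0.2, 0.5]
ad_vec = [1.0, 0.5, -0.3]

# Surface-specific heads: per-dimension scaling plus a bias (hypothetical values).
surface_heads = {
    "facebook_feed": {"scale": [1.0, 1.0, 1.0], "bias": -1.0},
    "instagram_stories": {"scale": [1.5, 0.5, 1.0], "bias": -0.5},
    "whatsapp": {"scale": [0.5, 0.5, 0.5], "bias": -2.0},
}


def p_engage(surface):
    """Same user, same ad, but a surface-tailored engagement probability."""
    head = surface_heads[surface]
    z = head["bias"] + sum(s * u * a for s, u, a
                           in zip(head["scale"], shared_user_vec, ad_vec))
    return 1 / (1 + math.exp(-z))


for surface in surface_heads:
    print(surface, round(p_engage(surface), 3))
```

The same user and the same ad get different probabilities per surface, which is one way of answering “should we show them the same ad on two surfaces?”.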

Data is gold in the age of technology, and the flywheel for Meta is incredibly powerful. GEM continuously trains on fresh interaction data, generates updated knowledge, transfers it to production models, those models serve better ads, the resulting interactions generate better training data, and GEM gets smarter, ad infinitum.

To sum up everything we’ve talked about so far:

- Lattice: A new model architecture that predicts an ad's performance across a variety of ad datasets and optimisation goals. This enables Meta to bring together all their knowledge across previously disjoint data libraries and generalise it into a much larger model for their ad ranking architecture, delivering higher-quality ads with higher conversion rates. It helps Meta decide which eligible ads to show and in what order.

- Sequence Learning: A summarisation model for person-level events, resulting in better personalisation and accuracy, recommending ads that might match a user’s current interests and intent. This allows Meta to predict that after you book a flight to Hawaii, chances are you might need some swimming shorts, and if you buy those, you might need some sunscreen, and if you buy that, you might be interested in some surf lessons. With this, Meta can understand both a user’s current behaviour and their broader profile.

- Andromeda: A retrieval system that helps Meta’s recommendation system filter from tens of millions of ads into a few thousand ads. It helps Meta decide which ads are eligible to be shown.

- GEM: A foundation model trained at LLM scale to optimise Meta’s entire ads recommendation system. This is the super brain, like “The Entity” in the Mission: Impossible finale, which learns from all of Meta’s data and helps generalise it into a usable form for all downstream models. It helps other Meta ad models become more efficient and accurate, and propagates global learnings down to the individual level.

The Manus Acquisition

All this sets the stage for the grand finale. But before that, a brief interlude in the form of Meta’s acquisition of Manus AI for $2B. When I first saw the news, I thought this was Meta’s answer to OpenAI/Anthropic and their generalised LLMs, positioning Meta as a foundation model provider for both consumers and businesses/prosumers. However, while writing this article, I came to view the Manus acquisition through a completely different lens.

The Manus acquisition is a play for Meta to power the next era of advertising and build the best rails to do so. As much as AI automator wannabes will continue to build their own “crazy marketing workflow with Claude that fully automates [insert marketing function]” for themselves, there is a whole swath of companies and employees with no interest in doing that (software is not dead; it will just be incredibly vertically integrated).

Manus AI is the first AI with a native Meta Ads integration. You can connect your Ads Manager directly through Manus connectors, with pre-built skills for analysing ads, generating reports, auditing campaigns, and more. You can interact with every feature of the advertising channel through natural language, and if you have ever tried to use a big tech analytics platform, you know very well how horrible most of them are.

If you have followed Manus since its early days, you will know that one of its standout use cases was marketing automation workflows. You will find countless blogs from marketers about the marketing workflows and social media automations they have built with Manus. Manus has continued to invest heavily in that vertical through educational courses and marketing data partnerships, such as its partnership with Similarweb.

Which brings me to the grand finale.

Advertising, Hyper Personalised, Fully Automated

There’s really only one major constraint in the entire Meta ad system: humans. Talk to anyone new to Meta Ads and they will tell you it is non-trivial to set up, from creatives, targeting, and tracking to performance analysis, all before figuring out whether Meta Ads is even a truly scalable marketing channel. At the other end, managing social media ads for large companies requires an army constantly chasing marginal improvements in return on ad spend.

Every single AI/ML investment Meta has made in its ad recommendation system has culminated in one thing: the creative. With the adoption of incredible new gen-AI models that are rapidly improving and will ultimately be used to create and optimise ad creative content, the number of ad creatives in Meta’s recommendation system will grow exponentially.

As they say, “Creative is the targeting, and data is the fuel”.

Look at the status quo today. This Meta blog post teaches users how to create an Advantage+ Catalogue ad. You only need to choose an objective, turn on specific ads at the campaign level, create your ad set, choose your audience, choose any exclusions, set up your ads, choose if you want dynamic media, add optional dynamic overlays and image touchups, enter a destination, and set up tracking. This is NOT simple by any means. And mind you, Advantage+ is meant to be a suite of AI-powered automation tools to reduce manual setup and lower acquisition costs by automatically optimising targeting, placements, and budgets.

Let me paint a picture of what the future will look like. Meta Ads is fully integrated with Manus, allowing campaigns to be created automatically. Meta spends its announced $115-135B in capex for 2026. Meta grows its model capacity 10,000x and, with further technological advances across GEM/Lattice/Andromeda, understands 10x more than before about the user and the ads they want to see. Now for the fun part. Through all those predictive models, Meta generates the PERFECT ad on the advertiser’s behalf for each individual user.

Imagine an ad with the perfect text on screen, the perfect image, the perfect video, the perfect audio, the perfect CTA, perfect everything. Not just for a subset of a generalised audience, but for an individual. A hypothetical example is a cozy winter sweater. Just for you, Meta is going to generate a video ad with the sweater in your favourite colour, overlaid with your favourite artist’s music, with a CTA that hits an emotion you didn’t even know you were feeling, featuring a male model who looks like your ideal man, sitting in a space that looks like your dream living room, all powered by Meta’s glorious capex and the vast amount of compute from its data center buildout.

How much money Meta makes from advertising (which accounts for 98+% of Meta’s revenue) depends on two factors: ad impressions and average price per ad. Consumers can be delighted to see the right ad, especially if it's something they genuinely need or want in that moment, and showing the right products with the right creatives is the input for that. So what determines how much an advertiser is willing to pay for an ad? At a high level, everything is a funnel. You want the highest net conversion rate, from someone viewing an ad to purchasing your product, across the entire funnel. The higher the conversion rate (assuming a fixed price for your product), the more you are willing to pay for each ad shown to the end user.
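That funnel logic turns into a back-of-the-envelope formula: an advertiser’s break-even price per impression is roughly the end-to-end conversion rate times the profit per sale. This is a simplification (it ignores customer lifetime value and brand effects), and the funnel rates and profit figure below are hypothetical.

```python
def max_bid_per_impression(funnel_rates, profit_per_sale):
    """Break-even price per ad impression given stage-by-stage conversion rates."""
    net_conversion = 1.0
    for rate in funnel_rates:
        net_conversion *= rate  # view -> click -> add to cart -> purchase
    return net_conversion * profit_per_sale


# Hypothetical funnel: 2% click, 20% add to cart, 50% purchase; $40 profit per sale.
baseline = max_bid_per_impression([0.02, 0.20, 0.50], 40.0)
# Same product, but a better-targeted creative doubles the click-through rate.
improved = max_bid_per_impression([0.04, 0.20, 0.50], 40.0)
print(f"${baseline:.3f} -> ${improved:.3f} per impression")  # $0.080 -> $0.160 per impression
```

Doubling any single stage of the funnel doubles what the advertiser can rationally pay for every impression.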

In the not-too-distant future, every single person viewing an ad on Meta will get a perfectly tailored, completely distinct, hyper-targeted ad creative. I think the increase in ad impressions and average price per ad when this finally becomes reality is not in the single-digit percentage points per year, but rather in the double-digit percentage points per quarter. I would not be surprised if Meta had a full year where the average price per ad increased by >50% alongside an equal increase in ad impressions. In comparison, Meta’s price per ad increased by 9% YoY, and ad impressions by 18% YoY in 2025. If you think ads are hyper-targeted today, think again.
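Because ad revenue is impressions times average price per ad, the two growth rates multiply rather than add. Applied to the hypothetical year above (price per ad and impressions each up 50%), and ignoring currency and mix effects:

```python
def ad_revenue_growth(price_growth, impression_growth):
    """Ad revenue = impressions x average price per ad, so growth rates compound."""
    return (1 + price_growth) * (1 + impression_growth) - 1


# The hypothetical year described above: +50% price per ad and +50% impressions.
scenario = ad_revenue_growth(0.50, 0.50)
print(f"{scenario:.0%}")  # 125%
```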

Stop for a second and imagine that across Meta’s 3.58 billion daily active users. Meta estimated that it shows 15 billion higher-risk/unwanted advertisements a day, which are projected to account for 10% of its overall annual revenue. Naively extrapolating (assuming those ads are also roughly 10% of all impressions), that’s 150 billion advertisements a day, or about 55 trillion advertisements a year (ChatGPT gave me an estimate of 10 trillion - 30 trillion ad impressions a year for Meta). Think about the scale required to produce 10 trillion video ads a year, and think about the amount of compute needed if every single one of those ads were generated from scratch by gen-AI models, perfectly tailored to the end viewer in real time.
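The naive extrapolation above, spelled out. The only assumption beyond the cited figures is the one it already makes: that higher-risk ads are about 10% of all impressions, not just 10% of revenue.

```python
# Figures cited above; the 10% share of impressions is the naive assumption.
higher_risk_ads_per_day = 15_000_000_000
assumed_share_of_all_ads = 10  # percent
daily_active_users = 3_580_000_000

ads_per_day = higher_risk_ads_per_day * 100 // assumed_share_of_all_ads
ads_per_year = ads_per_day * 365
ads_per_user_per_day = ads_per_day / daily_active_users

print(f"{ads_per_day:,} ads/day, {ads_per_year:,} ads/year, "
      f"~{ads_per_user_per_day:.0f} ads per DAU per day")
```

That works out to roughly 42 ads per daily active user per day, a useful sanity check on the order of magnitude.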

Another overlooked point is Meta’s smart glasses. Meta Ray-Bans sold over 7 million units in 2025, tripling from the 2023-2024 period, and Meta holds a 73% share of the smart glasses market. Think about the data Meta has from video footage of people wearing Meta Ray-Bans. You walk into a shopping mall and instinctively or subconsciously turn your head to look at a storefront. That’s a data point. You spend a few extra hundredths of a second looking at a product of a specific colour. That’s a data point. You spend your time doing certain activities and recording them. That’s a data point. Few, if any, other companies can match the breadth of platforms and unique data Meta collects and feeds into its ads recommendation system, combined with the full power of AI and compute.

The Street is worried there will be no demand for all the compute built out from hyperscaler capex. To me, it is obvious that Meta is different: the sheer scale of its advertising business, from creative generation to ad recommendation systems, will make it the largest consumer of its own compute. The return on investment will not come from consumers paying Meta for its generalised LLMs; it will come from incredible top-line growth in its advertising business, which will grow at unprecedented speed. There is an ungodly amount of money spent on Meta Ads agencies by companies that do not want to figure out Meta Ads, go through the hassle of creating ad creatives, or manage end-to-end campaigns. We will see a near-complete removal of the human touch from ad buying, and all that surplus accrues to Meta.

Final Thoughts

Advertising powers the global economy. Without it, consumers would not be able to find products they want. Without it, businesses would not be able to sell their products to consumers. Without it, we would lose trillions in equity value. Advertising makes the world a better place.

For the past 10 years, advertising has looked the same: the same channels, the same creatives, the same content types. AI brings about a paradigm shift unlike anything advertising has seen before: the ability to combine deeply personal ad insights and predictive mechanisms with on-demand, hyper-customised ad creative generation.

The world will produce exponentially more goods and services than ever before, enabled by the leverage AI provides. There will be a future where any individual selling something will be able to go to Meta, upload some product details/images, allocate a budget, and click “advertise my product”, and the engine of Meta’s ad recommendation system will roar to life as hundreds of MWs of compute buzz along.

You will get one-shotted by more Meta ads, and you are going to absolutely love it.